For patients with unresectable stage III non-small cell lung cancer (NSCLC), concurrent chemoradiation therapy remains the curative-intent backbone of treatment. Adding adjuvant durvalumab after chemoradiation transformed outcomes following the PACIFIC trial, in which median overall survival reached 47.5 months with durvalumab compared with 29.1 months without it (van Rossum et al., 2026). Yet not every patient appears to benefit equally. One possible reason is radiation-induced lymphopenia, a common and clinically important consequence of thoracic chemoradiation.

The investigators developed and externally validated a pretreatment model to predict severe radiation-induced lymphopenia before concurrent chemoradiation begins. Even more importantly, they explored whether predicted risk of severe lymphopenia was associated with the survival benefit of adjuvant durvalumab. Their findings suggest that a simple pretreatment risk model may help identify which patients are more likely to maintain immune fitness during chemoradiation and, therefore, may be better positioned to benefit from consolidation immunotherapy (van Rossum et al., 2026).

Why Radiation-Induced Lymphopenia Matters

Durvalumab works by blocking the PD-L1 axis and enhancing antitumor T-cell activity. That means its efficacy depends, at least in part, on the presence of functional lymphocytes. Severe radiation-induced lymphopenia, which often develops late during concurrent chemoradiation, has already been associated with worse outcomes in lung cancer and with reduced immunotherapy efficacy in prior studies cited by the authors (van Rossum et al., 2026).

This creates a practical problem. If severe lymphopenia only becomes obvious during or near the end of treatment, the opportunity to adapt radiation fields, modify dose distribution, or consider lymphocyte-sparing strategies may already be limited. A pretreatment model could change that by identifying patients at high risk before treatment starts. That is the key concept behind this study (van Rossum et al., 2026).

Study Design and Patient Population

The investigators assembled a retrospective development cohort of 451 patients with unresectable stage II or III NSCLC treated with concurrent chemoradiation between 2010 and 2019, with some patients also receiving durvalumab from 2018 onward as the standard of care evolved. To improve the robustness of the model, they combined consecutive real-world patients with patients treated on a randomized controlled trial at the same institution, increasing variation in fractionation and dose distribution patterns. This broader treatment heterogeneity was intended to improve generalizability and reduce overfitting (van Rossum et al., 2026).

The external validation cohort included 130 additional patients from three European centers treated between 2016 and 2022. These patients also underwent concurrent chemoradiation, with or without adjuvant durvalumab. Across the validation cohort, 105 patients, or 81%, received photon therapy and 25 patients, or 19%, received proton therapy. Most patients received 30 fractions or fewer, and platinum-based doublet chemotherapy was standard (van Rossum et al., 2026).

The outcome of interest was severe radiation-induced lymphopenia. Instead of using only the conventional Common Terminology Criteria for Adverse Events (CTCAE) cutoff of grade 3 or higher lymphopenia, the authors used a data-driven threshold chosen to best separate survival outcomes in exploratory analyses. Severe lymphopenia was defined as an absolute lymphocyte count (ALC) nadir below 0.24 K/µL during concurrent chemoradiation (van Rossum et al., 2026).

How Common Was Severe Radiation-Induced Lymphopenia?

In the development cohort, 164 of 451 patients, or 36%, developed severe radiation-induced lymphopenia using the study-defined threshold of ALC nadir below 0.24 K/µL. In the external validation cohort, 41 of 130 patients, or 32%, developed severe lymphopenia, showing a similar event rate across institutions (van Rossum et al., 2026).

The authors also reported a sensitivity analysis using the more familiar CTCAE grade 3 threshold of ALC nadir below 0.5 K/µL. By that definition, 374 of 451 patients in the development cohort, or 82.9%, developed grade 3 or higher lymphopenia. That very high frequency was part of the reason the authors chose a lower, data-derived cutoff for model development, allowing better separation between high- and low-risk groups (van Rossum et al., 2026).
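
As a minimal sketch of how the two cutoffs discussed here apply to one patient, the snippet below takes a series of on-treatment ALC measurements, finds the nadir, and flags it against both thresholds. The function and key names are illustrative and do not come from the paper, and the values are assumed to be in the same units as the reported thresholds.

```python
# Thresholds as reported in the study summary (same units as the ALC values).
SEVERE_RIL_CUTOFF = 0.24     # study-defined, data-driven cutoff
CTCAE_GRADE3_CUTOFF = 0.5    # conventional grade 3 or higher cutoff


def classify_alc_nadir(alc_values):
    """Return the nadir of a series of ALC values and flags for both cutoffs.

    `alc_values` is a hypothetical list of measurements taken during
    concurrent chemoradiation.
    """
    if not alc_values:
        raise ValueError("at least one ALC measurement is required")
    nadir = min(alc_values)
    return {
        "nadir": nadir,
        "severe_ril": nadir < SEVERE_RIL_CUTOFF,
        "ctcae_grade3_plus": nadir < CTCAE_GRADE3_CUTOFF,
    }


# Made-up weekly values for two patients.
patient_a = classify_alc_nadir([1.4, 0.9, 0.5, 0.3, 0.2])
patient_b = classify_alc_nadir([1.6, 1.0, 0.7, 0.45])
```

In this made-up example, patient A meets both definitions, while patient B meets only the CTCAE grade 3 definition, which illustrates why the stricter cutoff separates patients more sharply.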

Which Pretreatment Factors Predicted Severe Lymphopenia?

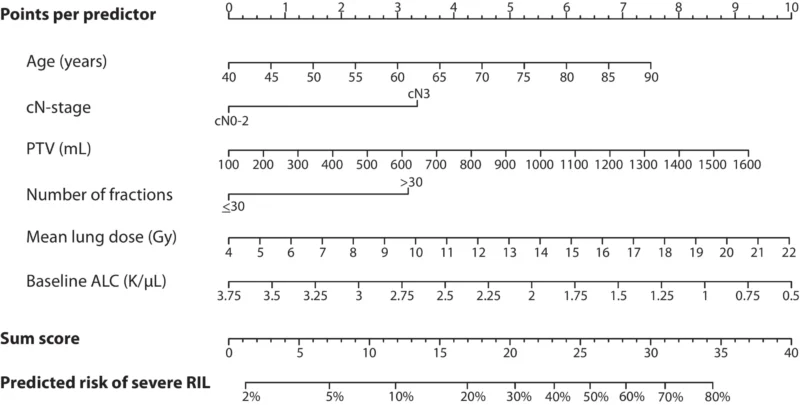

The final multivariable model identified six independent pretreatment predictors of severe radiation-induced lymphopenia. These were older age, cN3 nodal stage, larger planning target volume, more than 30 radiation fractions, higher mean lung dose, and lower baseline absolute lymphocyte count (van Rossum et al., 2026).

The specific adjusted effects were clinically intuitive. For every 10-year increase in age, the odds of severe lymphopenia increased, with an adjusted odds ratio of 1.27. Patients with cN3 disease had an adjusted odds ratio of 1.70 compared with cN0-2 disease. Larger planning target volume also mattered, with an adjusted odds ratio of 1.10 for every additional 100 mL. Receiving more than 30 fractions carried an adjusted odds ratio of 1.66. Higher mean lung dose increased risk as well, with an adjusted odds ratio of 1.09 per Gy. In contrast, higher baseline absolute lymphocyte count was protective, with an adjusted odds ratio of 0.61 per 1.0 K/µL increase (van Rossum et al., 2026).
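
To show how these adjusted odds ratios combine, here is a rough sketch that assumes the model is an ordinary logistic regression with no interaction terms, so that odds ratios multiply across predictors. This summary does not give the model intercept, so the sketch compares the odds for two patients rather than estimating an absolute risk. The dictionary keys and the example patient differences are hypothetical.

```python
import math

# Adjusted odds ratios as reported in the study summary.
ADJUSTED_OR = {
    "age_per_10y": 1.27,
    "cn3_vs_cn0_2": 1.70,
    "ptv_per_100ml": 1.10,
    "fractions_gt_30": 1.66,
    "mld_per_gy": 1.09,
    "baseline_alc_per_1k": 0.61,
}


def relative_odds(differences):
    """Odds multiplier between two patients.

    `differences` maps a predictor key to how many units patient B exceeds
    patient A by (assumption: linear effects on the log-odds scale).
    """
    log_odds_shift = sum(
        math.log(ADJUSTED_OR[name]) * units for name, units in differences.items()
    )
    return math.exp(log_odds_shift)


# Hypothetical comparison: patient B is 10 years older than patient A and has
# a mean lung dose 5 Gy higher, with all other predictors equal.
example = relative_odds({"age_per_10y": 1, "mld_per_gy": 5})
print(f"{example:.2f}")
```

Under these assumptions, patient B's odds of severe lymphopenia would be roughly 1.95 times those of patient A (1.27 multiplied by 1.09 raised to the fifth power).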

These predictors make biologic sense. Older age and lower baseline lymphocyte count likely reflect reduced immune reserve. More advanced nodal disease, larger target volumes, higher mean lung dose, and longer fractionation schedules likely expose larger blood pools, lymphoid structures, and marrow compartments to radiation, increasing the chance of lymphocyte depletion (van Rossum et al., 2026).

Model Performance

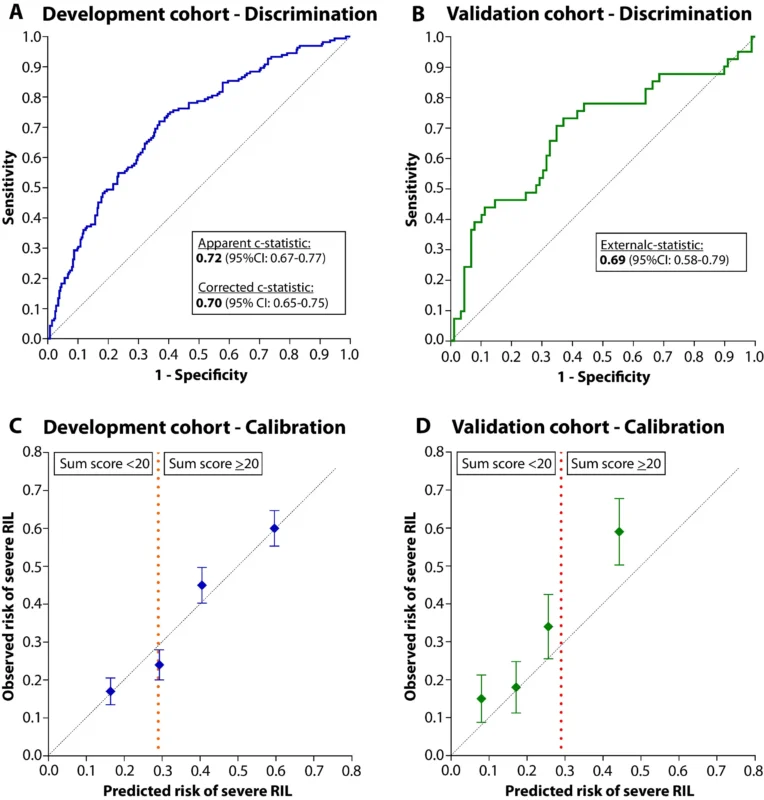

The pretreatment model performed consistently across the development and validation cohorts. In the development cohort, the corrected c-statistic after internal validation was 0.70, indicating fair discrimination. Calibration was also good, with observed risks closely matching predicted risks across quartiles. Mean predicted risks of severe lymphopenia were 16%, 29%, 41%, and 60%, corresponding to observed rates of 17%, 24%, 45%, and 60% (van Rossum et al., 2026).
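
The quartile figures above can be lined up to see how far observed rates drift from predicted rates. This is only an arithmetic check on the numbers reported in the summary, not the calibration method the authors used; the variable names are illustrative.

```python
# Mean predicted and observed rates of severe lymphopenia by risk quartile
# in the development cohort, in percent, as reported in the summary.
quartile_predicted = [16, 29, 41, 60]
quartile_observed = [17, 24, 45, 60]

# Positive gap: more events observed than predicted (risk underestimated).
gaps = [obs - pred for pred, obs in zip(quartile_predicted, quartile_observed)]
mean_abs_gap = sum(abs(g) for g in gaps) / len(gaps)

for quartile, gap in enumerate(gaps, start=1):
    print(f"Quartile {quartile}: observed minus predicted = {gap:+d} points")
print(f"Mean absolute gap: {mean_abs_gap} points")
```

The largest gap is 5 percentage points in the second quartile, and the mean absolute gap is 2.5 points.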

In the external validation cohort, the c-statistic was 0.69, which is very close to that seen in development. Calibration was more modest in the validation set, with some underestimation of risk in the highest quartiles, but overall discrimination remained stable. That consistency across institutions supports the idea that this pretreatment risk model is not merely a single-center statistical artifact (van Rossum et al., 2026).
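
For readers less familiar with the c-statistic: it is the probability that a randomly chosen patient who had the event was assigned a higher predicted risk than a randomly chosen patient who did not, with 0.5 meaning no better than chance and 1.0 meaning perfect ranking. A brute-force sketch on made-up data follows; it is meant to illustrate the definition, not to reproduce the authors' analysis.

```python
def c_statistic(predicted_risks, events):
    """Concordance between predicted risks and binary outcomes.

    Counts every (event, non-event) pair; a pair is concordant when the
    patient with the event has the higher predicted risk. Ties count half.
    """
    concordant = 0.0
    pairs = 0
    for risk_i, event_i in zip(predicted_risks, events):
        if not event_i:
            continue
        for risk_j, event_j in zip(predicted_risks, events):
            if event_j:
                continue
            pairs += 1
            if risk_i > risk_j:
                concordant += 1.0
            elif risk_i == risk_j:
                concordant += 0.5
    if pairs == 0:
        raise ValueError("need at least one event and one non-event")
    return concordant / pairs


# Made-up predicted risks and outcomes (1 = severe lymphopenia) for four patients.
toy_risks = [0.10, 0.40, 0.35, 0.80]
toy_events = [0, 0, 1, 1]
toy_c = c_statistic(toy_risks, toy_events)
```

In this toy data set three of the four event/non-event pairs are ranked correctly, giving a c-statistic of 0.75.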

The authors also converted the model into a nomogram with a total score ranging from 0 to 40, allowing simple bedside or clinic-based risk estimation before treatment starts (van Rossum et al., 2026).

Observed Severe Lymphopenia and Outcomes

Before moving to the durvalumab analysis, the study first confirmed that observed severe lymphopenia itself was linked to worse outcomes. Compared with patients without severe lymphopenia, those with observed severe lymphopenia had worse progression-free survival, with a hazard ratio of 1.55, and worse overall survival, with a hazard ratio of 1.89 (van Rossum et al., 2026).

This is important because it reinforces that radiation-induced lymphopenia is not just a laboratory abnormality. It appears clinically meaningful and may be closely tied to how well patients ultimately do after chemoradiation.

Predicted Lymphopenia Risk and Durvalumab Benefit

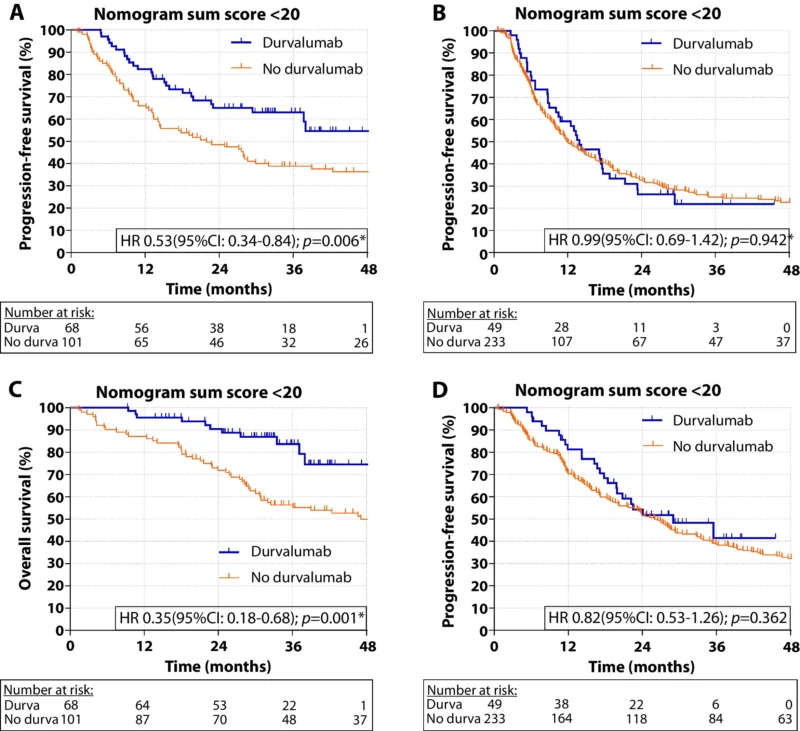

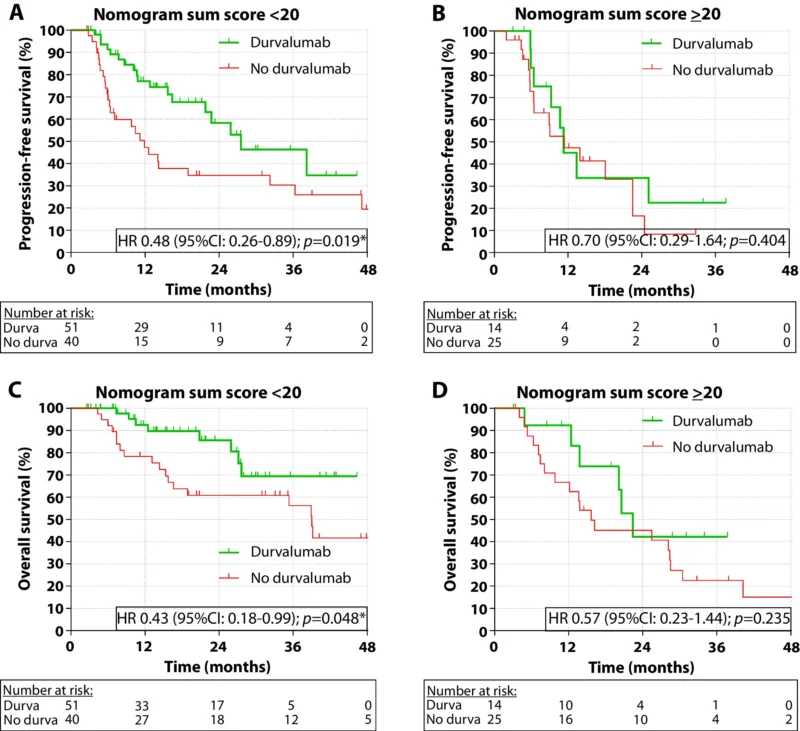

The most provocative finding in the paper was the exploratory analysis of durvalumab benefit according to predicted risk of severe lymphopenia. The authors divided patients into low-risk and high-risk groups using a nomogram score cutoff of 20.

In the development cohort, 169 patients had a low predicted risk score below 20. Among them, only 33 patients, or 19.5%, actually developed severe lymphopenia. Durvalumab was given to 68 patients in this low-risk group, or 40%. In these patients, durvalumab was associated with significantly improved progression-free survival, with a hazard ratio of 0.53, and significantly improved overall survival, with a hazard ratio of 0.35 (van Rossum et al., 2026).

By contrast, 282 patients had a high predicted risk score of 20 or greater. In this group, 131 patients, or 46.5%, developed severe lymphopenia. Only 49 patients, or 17%, received durvalumab. Here, durvalumab was not associated with a statistically significant improvement in progression-free survival, with a hazard ratio of 0.99, or in overall survival, with a hazard ratio of 0.82 (van Rossum et al., 2026).
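
The development-cohort counts reported for the two risk groups can be tallied in a few lines. The grouping rule (score below 20 is low risk) and the counts come from the summary above; the data structure and names are illustrative only.

```python
NOMOGRAM_CUTOFF = 20


def risk_group(nomogram_score):
    """Assign the risk group used in the exploratory durvalumab analysis."""
    return "low" if nomogram_score < NOMOGRAM_CUTOFF else "high"


# Development-cohort counts as reported in the summary.
development = {
    "low": {"n": 169, "severe_ril": 33, "durvalumab": 68},
    "high": {"n": 282, "severe_ril": 131, "durvalumab": 49},
}

summary = {}
for group, counts in development.items():
    summary[group] = {
        "severe_ril_pct": round(100 * counts["severe_ril"] / counts["n"], 1),
        "durvalumab_pct": round(100 * counts["durvalumab"] / counts["n"], 1),
    }

total_patients = sum(c["n"] for c in development.values())
total_severe = sum(c["severe_ril"] for c in development.values())
```

The two groups add up to the 451 patients and 164 severe lymphopenia events reported for the development cohort, and the uneven durvalumab use between groups (about 40% versus 17%) is one reason the comparison should be read cautiously.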

That same pattern reappeared in the external validation cohort. Among 91 low-risk patients, durvalumab was associated with improved progression-free survival, with a hazard ratio of 0.48, and improved overall survival, with a hazard ratio of 0.43. Among 39 high-risk patients, no statistically significant improvement was seen with durvalumab, with hazard ratios of 0.70 for progression-free survival and 0.57 for overall survival (van Rossum et al., 2026).

This repeated signal across both cohorts is what makes the study especially interesting. The association between durvalumab and improved outcomes appeared concentrated in patients with a low predicted risk of severe lymphopenia.

Landmark Analysis Strengthened the Signal

Because retrospective comparisons of durvalumab use can be vulnerable to immortal-time bias, the authors also performed a 1-month post-chemoradiation landmark analysis. This sensitivity analysis included only patients who were alive and progression-free at least one month after concurrent chemoradiation. The findings remained similar. Durvalumab continued to be associated with improved progression-free and overall survival in the low predicted-risk group, but not in the high predicted-risk group (van Rossum et al., 2026).
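
The cohort restriction behind a landmark analysis is simple to express. The sketch below assumes each record carries the time from the end of chemoradiation to progression, death, or last follow-up; the `Patient` class and its field names are hypothetical and not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    patient_id: str
    # Months from end of chemoradiation to progression, death, or last follow-up.
    pfs_months_from_crt_end: float
    received_durvalumab: bool


def landmark_cohort(patients, landmark_months=1.0):
    """Keep only patients still alive and progression-free at the landmark.

    Patients with an event (or with follow-up ending) before the landmark are
    excluded, so early events cannot be counted against the group that never
    had the chance to start durvalumab.
    """
    return [p for p in patients if p.pfs_months_from_crt_end >= landmark_months]


# Made-up records for illustration.
cohort = [
    Patient("a", 0.5, False),   # progressed before the landmark: excluded
    Patient("b", 1.0, True),
    Patient("c", 14.2, True),
]
eligible = landmark_cohort(cohort)
```

Survival comparisons are then run only within the retained patients, which removes the built-in advantage of having survived long enough to receive durvalumab.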

Although this does not eliminate all confounding, it does make the observation more credible.

What Could Explain This Pattern?

The authors propose a biologically plausible explanation. Severe lymphopenia may impair both the quantity and quality of immune reconstitution after chemoradiation. That could mean fewer available T cells, less T-cell receptor diversity, and weaker tumor antigen recognition. Since durvalumab depends on functional lymphocytes to restore antitumor immunity, severe lymphopenia may reduce the treatment’s effectiveness (van Rossum et al., 2026).

The pretreatment model may therefore be doing something clinically useful: integrating immune reserve, tumor burden, and expected radiation exposure into one score that identifies patients more likely to preserve enough lymphocyte function to benefit from adjuvant immunotherapy.

Why This Matters for Radiation Oncology

This study also has practical implications for treatment planning. Because the model uses only pretreatment variables, it opens the door to proactive lymphopenia-mitigating strategies before chemoradiation begins. These could include reducing planning target volume where appropriate, lowering mean lung dose, considering fewer fractions when clinically feasible, and exploring technologies that reduce integral dose.

The authors discuss proton therapy, adaptive radiation therapy, and other lymphocyte-sparing concepts as possible future approaches. In the current data, no clear difference between photons and protons emerged in the development cohort, but they note that more modern intensity-modulated proton therapy could offer additional benefit and deserves further study (van Rossum et al., 2026).

Limits of the Current Evidence

This is not a randomized biomarker trial, and the authors are careful to say so. The study is retrospective, and the severe lymphopenia threshold was selected in a data-driven way informed by outcome separation. That means the durvalumab-related findings should be considered exploratory and hypothesis-generating, not definitive proof that high-risk patients do not benefit from durvalumab (van Rossum et al., 2026).

In addition, certain potentially important variables, such as PD-L1 status and some oncogenic drivers, were not available for all patients. The validation cohort was also modest in size, especially in the high-risk subgroup, which limits precision (van Rossum et al., 2026).

Still, the consistency of the pattern across two independent cohorts gives the findings weight and strongly supports prospective validation.

The Bottom Line

Van Rossum and colleagues developed and externally validated a pretreatment model for severe radiation-induced lymphopenia in unresectable stage III NSCLC treated with concurrent chemoradiation. The model uses six pretreatment variables (age, cN stage, planning target volume, number of fractions, mean lung dose, and baseline lymphocyte count) and showed fair, consistent performance across cohorts, with c-statistics of 0.70 and 0.69 (van Rossum et al., 2026).

More importantly, in exploratory analyses, durvalumab was associated with significantly improved progression-free and overall survival in patients with a low predicted risk of severe lymphopenia, whereas no statistically significant association was observed in those with a high predicted risk. If confirmed prospectively, this approach could help personalize both radiation planning and adjuvant immunotherapy decisions in stage III NSCLC (van Rossum et al., 2026).
