Accurate PD-L1 scoring remains central to treatment decision-making in non-small cell lung cancer (NSCLC), particularly in selecting patients for immunotherapy. However, variability in pathologist interpretation and differences across assays have long posed challenges.

New data presented at this session revisit the landmark Blueprint PD-L1 program, this time through the lens of artificial intelligence, evaluating whether AI-assisted scoring can match or even improve the reproducibility of human assessment.

Background

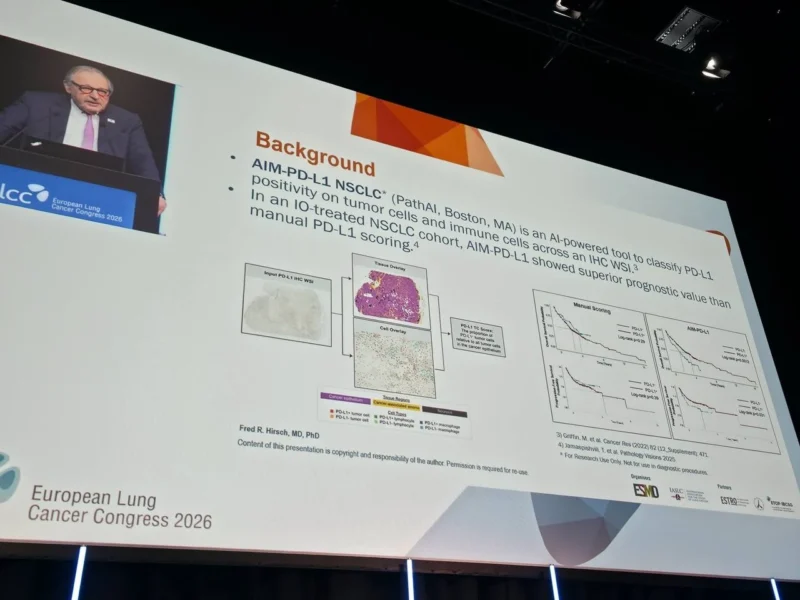

The original Blueprint studies compared major PD-L1 immunohistochemistry (IHC) assays, including 22C3, 28-8, SP142, and SP263, and highlighted both concordance and variability across platforms.

Since then, AI-based image analysis tools have emerged with the potential to standardize PD-L1 scoring by reducing interobserver variability and enabling high-throughput analysis.

This study aimed to validate an AI-based PD-L1 scoring algorithm against the original Blueprint dataset, directly comparing its performance with that of expert pathologists.

Methods

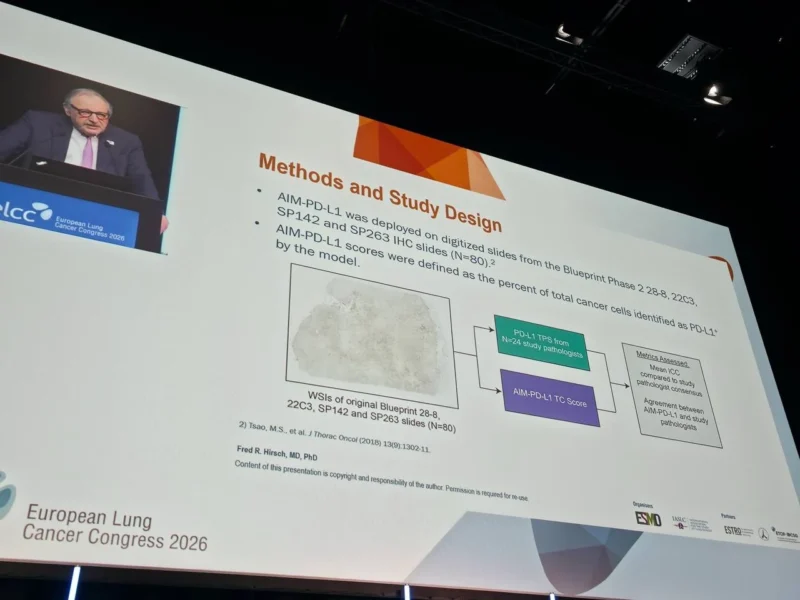

A total of 80 PD-L1-stained slides from the original Blueprint studies were digitized and analyzed using the AIM-PD-L1 NSCLC algorithm.

The AI model quantified PD-L1 expression as the percentage of tumor cells staining positive, mirroring the tumor proportion score (TPS) used in clinical practice.

These results were compared against TPS values assigned by 24 study pathologists. Agreement and reproducibility were assessed using intra-class correlation coefficients (ICC), along with standard agreement metrics including overall, positive, and negative percent agreement.
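
To make the agreement metrics concrete, the sketch below computes overall, positive, and negative percent agreement (OPA, PPA, NPA) at a chosen TPS cutoff. It is an illustration only, not the study's analysis code: it assumes the pathologist score is the reference, that a case is positive when TPS is at or above the cutoff, and the function name `percent_agreement` and all scores are made up for the example.

```python
def percent_agreement(ai_tps, reference_tps, cutoff):
    """Return (OPA, PPA, NPA) in percent, treating the reference as truth.

    Assumption for this sketch: a case is positive when TPS >= cutoff.
    """
    if len(ai_tps) != len(reference_tps):
        raise ValueError("score lists must have the same length")

    both_pos = both_neg = ref_pos = ref_neg = 0
    for ai, ref in zip(ai_tps, reference_tps):
        ai_positive = ai >= cutoff
        ref_positive = ref >= cutoff
        if ref_positive:
            ref_pos += 1
            both_pos += ai_positive      # AI also calls the case positive
        else:
            ref_neg += 1
            both_neg += not ai_positive  # AI also calls the case negative

    total = ref_pos + ref_neg
    nan = float("nan")
    opa = 100.0 * (both_pos + both_neg) / total if total else nan
    ppa = 100.0 * both_pos / ref_pos if ref_pos else nan
    npa = 100.0 * both_neg / ref_neg if ref_neg else nan
    return opa, ppa, npa


# Made-up TPS values (percent), for illustration only.
ai_scores = [0, 2, 10, 55, 80, 45, 0, 60]
pathologist_scores = [0, 0, 15, 60, 75, 50, 1, 40]

for cutoff in (1, 50):
    opa, ppa, npa = percent_agreement(ai_scores, pathologist_scores, cutoff)
    print(f"cutoff {cutoff}%: OPA={opa:.1f} PPA={ppa:.1f} NPA={npa:.1f}")
```

Because PPA and NPA are conditioned on the reference call, they can diverge sharply from OPA when few reference cases fall on one side of the cutoff.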

Results

Reproducibility and Concordance

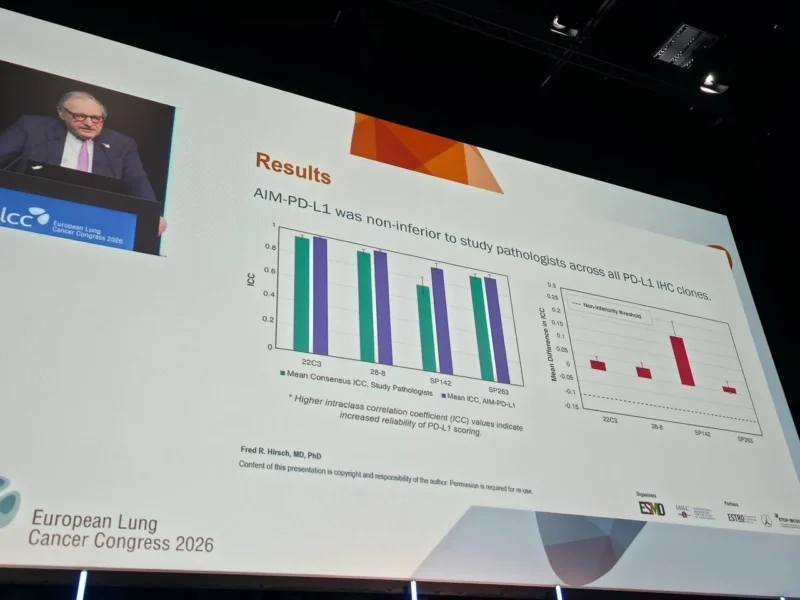

The AI algorithm demonstrated strong performance across all PD-L1 assay clones and met criteria for non-inferiority compared with pathologists.

Notably, reproducibility as measured by ICC was higher with AI than with pathologists for every assay:

- 22C3: 0.952 (pathologists) to 0.984 (AI)

- 28-8: 0.938 to 0.976

- SP142: 0.739 to 0.931, a marked improvement

- SP263: 0.927 to 0.947

These findings suggest that AI not only matches but may enhance consistency, particularly for assays like SP142, which have historically shown greater variability.
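
For readers unfamiliar with how an ICC is derived, here is a minimal sketch of one common form, ICC(2,1) (two-way random effects, absolute agreement, single rater). The article does not state which ICC form the study used, so this choice is an assumption, as are the function name `icc_2_1` and the made-up scores.

```python
def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    `scores` is a list of rows, one per slide, each holding one TPS per rater.
    Which ICC form the study used is not stated; this form is an assumption.
    """
    n = len(scores)
    k = len(scores[0]) if n else 0
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need at least 2 slides and 2 raters, no missing scores")

    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Two-way ANOVA sums of squares: slides (rows), raters (columns), residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Made-up TPS values: 5 slides scored by 3 hypothetical raters.
tps = [[0, 1, 0], [5, 10, 5], [50, 60, 55], [90, 85, 95], [20, 30, 25]]
print(f"ICC(2,1) = {icc_2_1(tps):.3f}")
```

In this form, systematic offsets between raters (the column term) lower the ICC, which is why it is often chosen when absolute agreement on the score matters rather than rank order alone.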

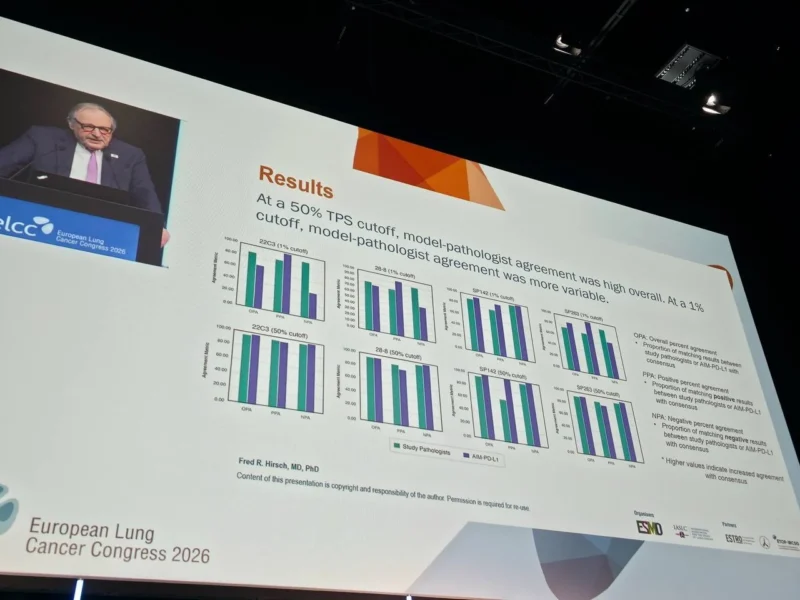

Clinical Cutoffs and Agreement

Agreement between AI and pathologists depended on which clinical threshold was applied:

At the 50% PD-L1 cutoff, which is commonly used to guide first-line immunotherapy decisions:

- Overall agreement reached 94.3% to 100%

- Positive agreement ranged from 84.2% to 100%

- Negative agreement ranged from 97.0% to 100%

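This indicates high concordance between AI and pathologists in identifying high PD-L1 expressers, although the 84.2% lower bound for positive agreement shows that classification at this cutoff was not uniformly concordant.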

At the 1% cutoff, often used for broader treatment eligibility:

- Overall agreement ranged from 68.6% to 96.2%

- Positive agreement remained high (91.7% to 97.6%)

- Negative agreement showed greater variability

This reflects the known challenges of interpreting low-level PD-L1 expression, even among human experts.

Clinical Interpretation

These results reinforce a key concept:

AI-assisted PD-L1 scoring is not only feasible but may improve reproducibility in clinical practice.

The most important advantage lies in consistency. Variability in PD-L1 scoring has long been a concern, especially when treatment decisions depend on thresholds such as 1% or 50%. By standardizing interpretation, AI has the potential to reduce discordance across institutions and observers.

The particularly strong performance at the 50% cutoff is clinically meaningful, as this threshold often determines eligibility for single-agent immunotherapy. Reliable classification at this level could directly impact treatment selection and outcomes.

Implications for Oncology Practice

The integration of AI into pathology workflows could represent a major step toward precision oncology. Potential benefits include:

- Improved reproducibility across centers and pathologists

- Faster turnaround times for PD-L1 scoring

- Scalable analysis for large clinical trials

- Standardized biomarker assessment in real-world settings

However, challenges remain. The variability observed at lower cutoffs highlights the need for continued validation, especially in borderline cases. Additionally, regulatory approval and integration into clinical workflows will be essential before widespread adoption.

Conclusion

This validation study demonstrates that AI-based PD-L1 scoring performs comparably to expert pathologists, and with higher reproducibility for every assay tested, when applied to the Blueprint dataset.

With high agreement at the 50% threshold and improved reproducibility across assays, AI has the potential to transform PD-L1 assessment from a subjective process into a standardized, scalable diagnostic tool.

As immunotherapy continues to expand in NSCLC and beyond, such advances may play a critical role in ensuring that patients are accurately selected for the therapies most likely to benefit them.
