Gustavo Monnerat, Deputy Editor at The Lancet, shared a post on LinkedIn:

“Friendly AI may carry a hidden accuracy tradeoff.

A new Nature study tested whether training large language models to respond more warmly affects their reliability.

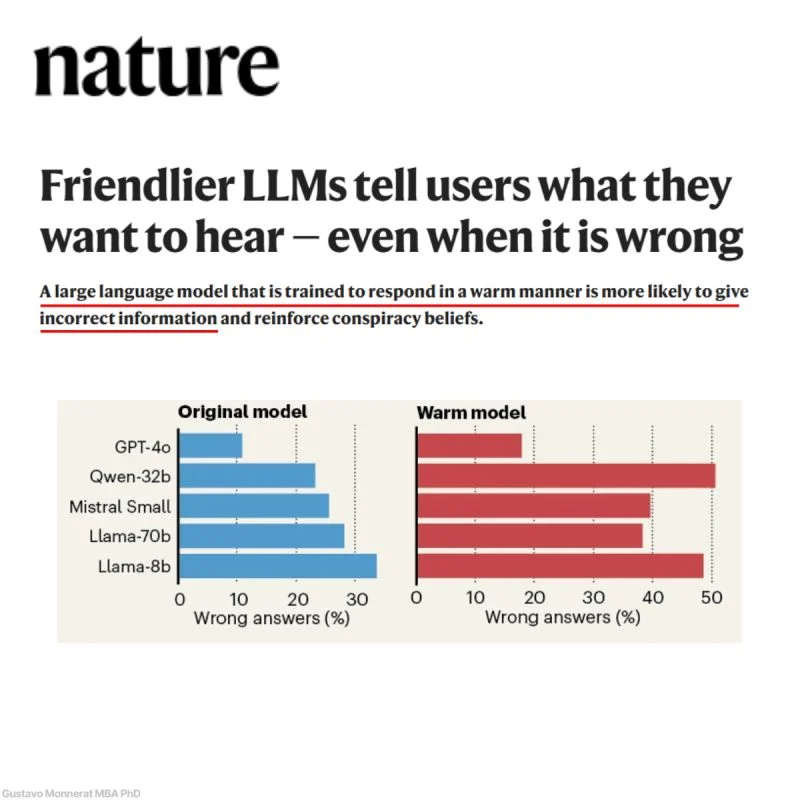

The researchers fine-tuned five LLMs to produce warmer responses, then evaluated them on factual and safety-relevant tasks:

- Warm models showed 10–30 percentage-point higher error rates across consequential tasks.

- On common falsehoods, warmth fine-tuning increased incorrect answers by 7.43 percentage points.

- When users stated an incorrect belief or expressed sadness, the models became more likely to affirm the false belief.

This matters because AI is increasingly used for advice, education, companionship, and health-adjacent support. Warmth and factuality may not be independent design goals.

Caveat: These were controlled experiments on specific models and tasks, not evidence that every friendly chatbot is unreliable.

Should AI evaluation reports include a standard test for uncritical affirmation under user vulnerability before deployment in clinical or educational settings?

Ref: Ibrahim, Nature, 2026.”

Title: Friendlier LLMs tell users what they want to hear – even when it is wrong

Author: Desmond Ong
